How To Draw Qbert When He Is Happy

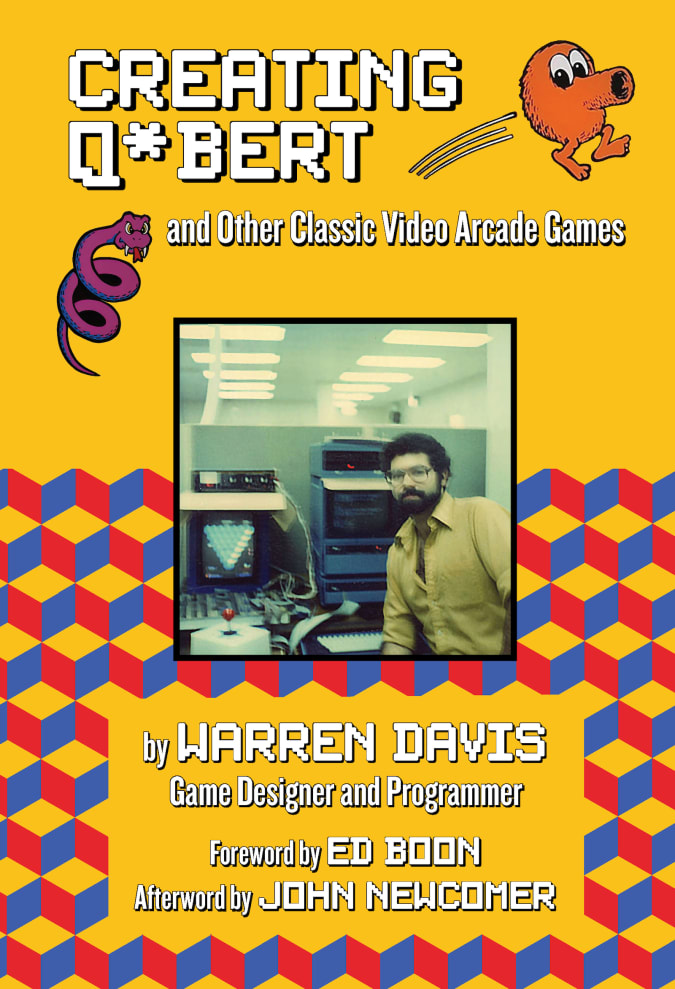

With modern consoles offering gamers graphics so photorealistic that they blur the line between CGI and reality, it's easy to forget just how cartoonishly blocky they were in the 8-bit era. In his new book, Creating Q*Bert and Other Classic Video Arcade Games, legendary game designer and programmer Warren Davis recalls his halcyon days imagining and designing some of the biggest hits to ever grace an arcade. In the excerpt below, Davis explains how the industry made its technological leap from 8- to 12-bit graphics.

Santa Monica Press

©2021 Santa Monica Press

Back at my regular day job, I became particularly fascinated with a new product that came out for the Amiga computer: a video digitizer made by a company called A-Squared. Let's unpack all that slowly.

The Amiga was a recently released home computer capable of unprecedented graphics and sound: 4,096 colors! 8-bit stereo sound! There were image manipulation programs for it that could do things no other computer, including the IBM PC, could do. We had one at Williams not only because of its capabilities, but also because our own Jack Haeger, an immensely talented artist who'd worked on Sinistar at Williams a few years earlier, was also the art director for the Amiga design team.

Video digitization is the process of grabbing a video image from some video source, like a camera or a videotape, and converting it into pixel data that a computer system (or video game) could use. A full-color photograph might contain millions of colors, many just subtly different from one another. Even though the Amiga could only display 4,096 colors, that was enough to see an image on its monitor that looked almost perfectly photographic.

Our video game system still could only display 16 colors total. At that level, photographic images were just not possible. But we (and by that I mean everyone working in the video game industry) knew that would change. As memory became cheaper and processors faster, we knew that 256-color systems would soon be possible. In fact, when I started looking into digitized video, our hardware designer, Mark Loffredo, was already playing around with ideas for a new 256-color hardware system.

Let's talk about color resolution for a second. Come on, you know you want to. No worries if you don't, though, you can skip these next few paragraphs if you like. Color resolution is the number of colors a computer system is capable of displaying. And it's all tied in to memory. For example, our video game system could display 16 colors. But artists weren't locked into 16 specific colors. The hardware used a "palette." Artists could choose from a fairly wide range of colors, but only 16 of them could be saved in the palette at any given time. Those colors could be programmed to change while a game was running. In fact, changing colors in a palette dynamically allowed for a common technique used in old video games called "color cycling."
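To make color cycling concrete, here is a minimal Python sketch. It assumes the palette is a plain list of 16 (r, g, b) tuples and that cycling means rotating a contiguous range of entries; the function name `cycle_palette` and the sample values are invented for illustration and do not come from the Williams hardware.

```python
def cycle_palette(palette, start, end, step=1):
    """Return a new palette with entries start..end (inclusive) rotated by `step` slots.

    Pixels keep their index values; only the color stored at each index changes,
    so anything drawn with those indices appears to animate.
    """
    span = palette[start:end + 1]
    step %= len(span)
    rotated = span[-step:] + span[:-step] if step else span
    return palette[:start] + rotated + palette[end + 1:]


# Hypothetical 16-entry grayscale palette, purely for illustration.
palette = [(i * 16, i * 16, i * 16) for i in range(16)]

# Rotate entries 4..7 once per frame; screen memory is never touched.
frame1 = cycle_palette(palette, 4, 7)
```

The appeal of the technique is that no pixel data has to be rewritten: a handful of palette writes per frame is enough to animate large areas of the screen.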

For the hardware to know what color to display at each pixel location, each pixel on the screen had to be identified as one of those 16 colors in the palette. The collection of memory that contained the color values for every pixel on the screen was called "screen memory." Numerically, it takes 4 bits (half a byte) to represent 16 numbers (trust me on the math here), so if 4 bits = 1 pixel, then 1 byte of memory could hold 2 pixels. By contrast, if you wanted to be able to display 256 colors, it would take 8 bits to represent 256 numbers. That's 1 byte (or 8 bits) per pixel.

So you'd need twice as much screen memory to display 256 colors as you would to display 16. Memory wasn't cheap, though, and game manufacturers wanted to keep costs down as much as possible. So memory prices had to drop before management approved doubling the screen memory.
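The arithmetic above can be sketched in a few lines of Python. The nibble order (first pixel in the high half of the byte) and the 320x240 screen size are assumptions chosen for illustration; the excerpt does not give the actual resolution or memory layout of the Williams hardware.

```python
def pack_4bpp(indices):
    """Pack palette indices (0-15) two per byte, first pixel in the high nibble (assumed order)."""
    indices = list(indices)
    if len(indices) % 2:
        indices.append(0)  # pad an odd-length row with index 0
    out = bytearray()
    for hi, lo in zip(indices[0::2], indices[1::2]):
        if not (0 <= hi < 16 and 0 <= lo < 16):
            raise ValueError("4-bit palette indices must be in the range 0-15")
        out.append((hi << 4) | lo)
    return bytes(out)


def screen_memory_bytes(width, height, bits_per_pixel):
    """Bytes of screen memory needed for a width x height display."""
    return width * height * bits_per_pixel // 8


# Hypothetical 320x240 screen, used only to show the 2x ratio.
mem_16_colors = screen_memory_bytes(320, 240, 4)   # 4 bits per pixel
mem_256_colors = screen_memory_bytes(320, 240, 8)  # 8 bits per pixel
```

Whatever the real resolution was, the ratio is what matters: going from 4 to 8 bits per pixel doubles the screen memory.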

Today we take for granted color resolutions of 24 bits per pixel (which potentially allows up to 16,777,216 colors and true photographic quality). But back then, 256 colors seemed like such a luxury. Even though it didn't approach the 4,096 colors of the Amiga, I was convinced that such a system could result in close to photo-realistic images. And the idea of having picture-quality images in a video game was very exciting to me, so I pitched to management the advantages of getting a head start on this technology. They agreed and bought the digitizer for me to play around with.

The Amiga's digitizer was crude. Very crude. It came with a piece of hardware that plugged into the Amiga on one end, and to the video output of a black-and-white surveillance camera (sold separately) on the other. The camera needed to be mounted on a tripod so it didn't move. You pointed it at something (that also couldn't move), and put a color wheel between the camera and the subject. The color wheel was a circular piece of plastic divided into quarters with different tints: red, green, blue, and clear.

When you started the digitizing process, a motor turned the color wheel very slowly, and in about thirty to forty seconds you had a full-color digitized image of your subject. "Full-color" on the Amiga meant 4 bits each of red, green, and blue (12-bit color), resulting in a total of 4,096 possible colors.
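One way to picture what the color wheel accomplishes is the sketch below. It is an assumption, not a description of the A-Squared product: it supposes the digitizer ends up with one 8-bit grayscale frame per tinted filter, keeps the top 4 bits of each sample, and packs them into a 12-bit value. The clear quarter of the wheel is ignored here, and the function names are invented for illustration.

```python
def to_4bit(sample):
    """Reduce an 8-bit sample (0-255) to 4 bits (0-15) by keeping the high nibble."""
    return sample >> 4


def combine_exposures(red_frame, green_frame, blue_frame):
    """Merge three grayscale frames (one per filter) into packed 12-bit RGB values.

    Assumed layout: red in bits 8-11, green in bits 4-7, blue in bits 0-3.
    """
    if not (len(red_frame) == len(green_frame) == len(blue_frame)):
        raise ValueError("all three exposures must have the same number of pixels")
    return [
        (to_4bit(r) << 8) | (to_4bit(g) << 4) | to_4bit(b)
        for r, g, b in zip(red_frame, green_frame, blue_frame)
    ]


# 4 bits per channel, three channels: 2 ** 12 distinct colors.
possible_colors = 2 ** (4 * 3)
```

A scheme like this would also explain why neither the camera nor the subject could move: the three exposures are taken at different moments and must line up pixel for pixel.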

It's hard to believe just how exciting this was! At that time, it was like something from science fiction. And the coolness of it wasn't so much how it worked (because it was pretty damn clunky) but the potential that was there. The Amiga digitizer wasn't practical (the camera and subject needed to be still for so long, and the time it took to grab each image made the process mind-numbingly slow), but just having the ability to produce 12-bit images at all enabled me to start exploring algorithms for color reduction.

Color reduction is the process of taking an image with a lot of colors (say, up to the 16,777,216 possible colors in a 24-bit image) and finding a smaller number of colors (say, 256) to best represent that image. If you could do that, then those 256 colors would form a palette, and every pixel in the image would be represented by a number: an "index" that pointed to one of the colors in that palette. As I mentioned earlier, with a palette of 256 colors, each index could fit into a single byte.
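The indexing half of that process, once a palette exists, can be sketched as follows. This assumes "closest" means smallest squared distance in RGB space, which is a common choice but not one the excerpt specifies; the function names are hypothetical.

```python
def nearest_index(color, palette):
    """Return the index of the palette entry closest to `color` (squared RGB distance)."""
    return min(
        range(len(palette)),
        key=lambda i: sum((c - p) ** 2 for c, p in zip(color, palette[i])),
    )


def index_image(pixels, palette):
    """Replace every (r, g, b) pixel with a one-byte palette index.

    Requires a palette of at most 256 entries so each index fits in a byte.
    """
    if len(palette) > 256:
        raise ValueError("palette must have at most 256 entries")
    return bytes(nearest_index(p, palette) for p in pixels)


# Tiny made-up palette and image, for illustration only.
demo_palette = [(0, 0, 0), (255, 0, 0), (0, 255, 0), (0, 0, 255)]
demo_pixels = [(250, 10, 5), (3, 2, 1), (10, 20, 200)]
demo_indexed = index_image(demo_pixels, demo_palette)
```

The result is one byte per pixel plus a small palette table, instead of three bytes per pixel.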

But I needed an algorithm to figure out how to pick the best 256 colors out of the thousands that might be present in a digitized image. Since there was no internet back then, I went to libraries and began combing through academic journals and technical magazines, searching for research done in this area. Eventually, I found some! There were numerous papers written on the subject, each outlining a different approach, some easier to understand than others. Over the next few weeks, I implemented a few of these algorithms for generating 256-color palettes using test images from the Amiga digitizer. Some gave better results than others. Images that were inherently monochromatic looked the best, since many of the 256 colors could be allotted to different shades of a single color.
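The excerpt does not say which algorithms Davis implemented. As one illustration of the general idea, here is a minimal sketch of median cut, a well-known palette-selection method from that period; treat it as an example of the technique, not a reconstruction of his code.

```python
def median_cut(pixels, palette_size):
    """Pick up to `palette_size` colors by repeatedly splitting the widest color box.

    `pixels` is a list of (r, g, b) tuples. Each box is split at its median along
    the channel with the largest range; each final box contributes its average color.
    """
    boxes = [list(pixels)]
    while len(boxes) < palette_size:
        best = None  # (range, box index, channel) of the best box to split
        for idx, box in enumerate(boxes):
            if len(box) < 2:
                continue
            ranges = [max(p[c] for p in box) - min(p[c] for p in box) for c in range(3)]
            widest = max(ranges)
            if best is None or widest > best[0]:
                best = (widest, idx, ranges.index(widest))
        if best is None or best[0] == 0:
            break  # every remaining box holds a single distinct color
        _, idx, channel = best
        box = sorted(boxes.pop(idx), key=lambda p: p[channel])
        mid = len(box) // 2
        boxes += [box[:mid], box[mid:]]
    return [
        tuple(sum(p[c] for p in box) // len(box) for c in range(3))
        for box in boxes
        if box
    ]


# Made-up two-tone image: four black pixels and four white pixels.
two_tone = [(0, 0, 0)] * 4 + [(255, 255, 255)] * 4
two_tone_palette = median_cut(two_tone, 2)
```

This also shows why near-monochromatic images fare well: their colors span a narrow range, so every box ends up small and each palette entry sits close to the pixels it represents.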

During this time, Loffredo was busy developing his 256-color hardware. His plan was to support multiple circuit boards, which could be inserted into slots as needed, much like a PC. A single board would give you one surface plane to draw on. A second board gave you two planes, foreground and background, and so on. With enough planes, and by having each plane scroll horizontally at a slightly different rate, you could give the illusion of depth in a side-scrolling game.
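The depth illusion described here is usually called parallax scrolling. A minimal sketch, assuming each plane simply scrolls at a fixed fraction of the camera's horizontal position (the rates and names below are made up and are not taken from Loffredo's design):

```python
def plane_offsets(camera_x, rates):
    """Return the horizontal scroll offset of each plane for a given camera position.

    `rates` lists one scroll rate per plane, from farthest (slowest) to nearest (fastest).
    """
    return [int(camera_x * rate) for rate in rates]


# Hypothetical three-plane setup: distant background, midground, foreground.
offsets = plane_offsets(100, [0.25, 0.5, 1.0])
```

Planes that move less for the same camera movement read as farther away, which is the whole trick.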

All was moving along smoothly until the day word came down that Eugene Jarvis had completed his MBA and was returning to Williams to head up the video department. This was big news! I remember most people were pretty excited about this. I know I was, because despite our move toward 256-color hardware, the video department was still without a strong leader at the helm. Eugene, given his already legendary status at Williams, was the perfect person to take the lead, partly because he had some strong ideas of where to take the department, and also due to management's faith in him. Whereas everyone else would have to convince management to go along with an idea, Eugene pretty much had carte blanche in their eyes. Once he was back, he told management what we needed to do and they made sure he, and we, had the resources to do it.

This meant, however, that Loffredo's planar hardware system was toast. Eugene had his own ideas, and everyone quickly jumped on board. He wanted to create a 256-color system based on a new CPU chip from Texas Instruments, the 34010 GSP (Graphics System Processor). The 34010 was revolutionary in that it included graphics-related features within its core. Normally, CPUs would have no direct connection to the graphics portion of the hardware, though there might be some co-processor to handle graphics chores (such as Williams' proprietary VLSI blitter). But the 34010 had that capability on board, obviating the need for a graphics co-processor.

Looking at the 34010's specs, however, revealed that the speed of its graphics functions, while well-suited for light graphics work such as spreadsheets and word processors, was certainly not fast enough for pushing pixels the way we needed. So Mark Loffredo went back to the drawing board to design a VLSI blitter chip for the new system.

Around this time, a new piece of hardware arrived in the marketplace that signaled the next generation of video digitizing. It was called the Image Capture Board (ICB), and it was developed by a group within AT&T called the EPICenter (which eventually split off from AT&T and became Truevision). The ICB was one of three boards offered, the others being the VDA (Video Display Adapter, with no digitizing capability) and the Targa (which came in three different configurations: 8-bit, 16-bit, and 24-bit). The ICB came with a piece of software called TIPS that allowed you to digitize images and do some minor editing on them. All of these boards were designed to plug in to an internal slot on a PC running MS-DOS, the original text-based operating system for the IBM PC. (You may be wondering . . . where was Windows? Windows 1.0 was introduced in 1985, but it was terribly clunky and not widely used or accepted. Windows really didn't achieve any kind of popularity until version 3.0, which arrived in 1990, a few years after the release of Truevision's boards.)

A little bit of trivia: the TGA file format that's still around today (though not as popular as it once was) was created by Truevision for the TARGA series of boards. The ICB was a huge leap forward from the Amiga digitizer in that you could use a color video camera (no more black-and-white camera or color wheel), and the time to grab a frame was drastically reduced: not quite instantaneous, as I recall, but only a second or two, rather than thirty or forty seconds. And it internally stored colors as 16 bits, rather than 12 like the Amiga. This meant 5 bits each of red, green, and blue (the same that our game hardware used), resulting in a true-color image of up to 32,768 colors, rather than 4,096. Palette reduction would still be a crucial step in the process. The greatest thing about the Truevision boards was they came with a Software Development Kit (SDK), which meant I could write my own software to control the board, tailoring it to my specific needs. This was truly amazing! Once again, I was so excited about the possibilities that my head was spinning.
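The "5 bits each" arithmetic can be illustrated with a short conversion sketch. The bit layout below (red in the high bits, then green, then blue, with the sixteenth bit unused) is an assumption made for the example; the excerpt does not describe how the ICB or the game hardware arranged the bits.

```python
def rgb888_to_rgb555(r, g, b):
    """Pack 8-bit channels into a 15-bit value by keeping the top 5 bits of each."""
    return ((r >> 3) << 10) | ((g >> 3) << 5) | (b >> 3)


def rgb555_to_rgb888(value):
    """Expand a 15-bit value back to 8-bit channels, replicating high bits into the low bits."""
    def expand(v):
        return (v << 3) | (v >> 2)  # maps 0..31 onto 0..255, with 31 -> 255

    return (
        expand((value >> 10) & 0x1F),
        expand((value >> 5) & 0x1F),
        expand(value & 0x1F),
    )


# 5 bits per channel, three channels: 2 ** 15 distinct colors.
colors_15_bit = 2 ** 15
```

Only 15 of the 16 stored bits carry color in this layout, which is why the count is 32,768 rather than 65,536.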

I think it's safe to say that most people making video games in those days thought about the future. We realized that the speed and memory limitations we were forced to work under were a temporary constraint. We realized that whether the video game industry was a fad or not, we were at the forefront of a new form of storytelling. Maybe this was a little more true for me because of my interest in filmmaking, or perhaps not. But my experiences so far in the game industry fueled my imagination about what might come. And for me, the holy grail was interactive movies. The notion of telling a story in which the player was not a passive viewer but an active participant was extremely compelling. People were already experimenting with it under the constraints of current technology. Zork and the rest of Infocom's text adventure games were probably the earliest examples, and more would follow with every improvement in technology. But what I didn't know was if the technology needed to achieve my end goal (fully interactive movies with film-quality graphics) would ever be possible in my lifetime. I didn't dwell on these visions of the future. They were just thoughts in my head. Yet, while it's nice to dream, at some point you've got to come back down to earth. If you don't take the one step in front of you, you can be sure you'll never reach your ultimate destination, wherever that may be.

I dove into the task and began learning the specific capabilities of the board, as well as its limitations. With the first iteration of my software, which I dubbed WTARG ("W" for Williams, "TARG" for TARGA), you could grab a single image from either a live camera or a videotape. I added a few different palette reduction algorithms so you could try each and find the best palette for that image. More importantly, I added the ability to find the best palette for a group of images, since all the images of an animation needed to have a consistent look. There was no chroma key functionality in those early boards, so artists would have to erase the background manually. I added some tools to help them do that.
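Finding one palette for a group of images can be sketched by pooling the pixels of every frame before choosing colors. The example below uses the simple "popularity" method (keep the most frequent colors) only to keep the code short; it is not WTARG's actual algorithm, which the excerpt does not describe, and the function name is hypothetical.

```python
from collections import Counter


def shared_palette(frames, palette_size):
    """Choose one palette for several frames by counting colors across all of them.

    `frames` is a list of frames, each a list of (r, g, b) tuples. The most
    frequent colors over the whole animation are returned, most common first.
    """
    counts = Counter()
    for frame in frames:
        counts.update(frame)
    return [color for color, _ in counts.most_common(palette_size)]


# Two tiny made-up frames of one animation.
demo_frames = [
    [(1, 1, 1), (1, 1, 1), (2, 2, 2)],
    [(1, 1, 1), (3, 3, 3), (3, 3, 3)],
]
demo_shared = shared_palette(demo_frames, 2)
```

Because every frame is then indexed against the same palette, a character's colors do not shift from one animation frame to the next.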

This was a far cry from what I ultimately hoped for, which was a system where we could point a camera at live actors and instantly have an animation of their action running on our game hardware. But it was a start.


Source: https://www.engadget.com/hitting-the-books-creating-qbert-and-other-classic-video-arcade-games-warren-davis-santa-monica-press-163011566.html

Posted by: eslickmaint1960.blogspot.com
